PDC 2008, Day #2, Keynote #2, 2 hrs

Ray Ozzie, Steven Sinofsky, Scott Guthrie, David Treadwell

Wow. In contrast to yesterday’s keynote, where Windows Azure was launched, today’s keynote was the kind of edge-of-your-seat collection of product announcements that explain why people shell out $1,000+ to come to PDC. The keynote was a 2-hr extravaganza of non-stop announcements and demos.

In short, we got a good dose of Windows 7, as well as new tools in .NET 3.5 SP1, Visual Studio 2008 SP1 and the future release of Visual Studio 2010. Oh yeah—and an intro to Office 14, with online web-based versions of all of your favorite Office apps.

Not to mention a new Paint applet with a ribbon interface. Really.

Ray Ozzie Opening

The keynote started once again today with Ray Ozzie, reminding us of what was announced yesterday—the Azure Services Platform.

Ray pointed out that while yesterday focused on the back-end, today’s keynote would focus on the front-end: new features and technologies from a user’s perspective.

He pointed out that the desktop-based PC and the internet are still two completely separate worlds. The PC is where we sit when running high-performance close-to-the-metal applications. And the web is how we access the rest of the world, finding and accessing other people and information.

Ray also talked about the phone being the third main device where people spend their time. It’s always with us, so can respond to our spontaneous need for information.

The goal for Microsoft, of course, is that applications try to span all three of these devices—the desktop PC, the web, and the phone. The apps that can do this, says Ozzie, will deliver the greatest value.

It’s no surprise either that Ray mentioned Microsoft development tools as providing the best platform for developing these apps that will span the desktop/web/phone silos.

Finally, Ray positioned Windows 7 as being the best platform for users, since we straddle these three worlds.

Steven Sinofsky

Next up was Steven Sinofsky, Senior VP for Windows and Windows Live Engineering Group at Microsoft. Steven’s part of the keynote was to introduce Windows 7. Here are a couple of tidbits:

- Windows 7 now in pre-beta

- Today’s pre-beta represents “M3”—a feature-complete milestone on the way to Beta and eventual RTM. (The progression is M1/M2/M3/M4/Beta)

- The beta will come out early in 2009

- Release still targeted at 3-yrs after the RTM of Vista, putting it at November of 2009

- Server 2008 R2 is also in pre-beta, sharing its kernel with Windows 7

Steven mentioned three warm-fuzzies that Windows 7 would focus on:

- Focus on the personal experience

- Focus on connecting devices

- Focus on bringing functionality to developers

Julie Larson-Green — Windows 7 Demo

Next up was Julie Larson-Green, Corporate VP, Windows Experience. She took a spin through Windows 7 and showed off a number of the new features and capabilities.

New taskbar

- Combines Alt-Tab for task switching, current taskbar, and current quick launch

- Taskbar includes icons for running apps, as well as non-running (icons to launch apps)

- Can even switch between IE tabs from the taskbar, or close tabs

- Can close apps directly from the taskbar

- Can access app’s MRU lists from the taskbar (recent files)

- Can drag/dock windows on desktop, so that they quickly take exactly half available real estate

Windows Explorer

- New Libraries section

- A library is a virtual folder, providing access to one or more physical folders

- Improved search within a library, i.e. across a subset of folders
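To make the library idea a bit more concrete, here is a minimal Python sketch of a virtual folder that groups several physical folders and searches across all of them. This is not the Windows 7 Libraries API. The `Library` class, its methods, and the `demo` helper are hypothetical names made up for illustration.

```python
import fnmatch
import os
import tempfile


class Library:
    """Hypothetical virtual folder: a named view over several real folders."""

    def __init__(self, name, folders=()):
        self.name = name
        self.folders = list(folders)

    def add_folder(self, path):
        # Adding a folder changes only the view; no files are moved or copied.
        if path not in self.folders:
            self.folders.append(path)

    def search(self, pattern):
        """Return sorted paths of files, in any member folder, matching a glob pattern."""
        matches = []
        for folder in self.folders:
            for root, _dirs, files in os.walk(folder):
                for filename in fnmatch.filter(files, pattern):
                    matches.append(os.path.join(root, filename))
        return sorted(matches)


def demo():
    """Build two throwaway folders, group them in one library, and search it."""
    with tempfile.TemporaryDirectory() as base:
        music = os.path.join(base, "music")
        downloads = os.path.join(base, "downloads")
        os.makedirs(music)
        os.makedirs(downloads)
        samples = [(music, "a.mp3"), (downloads, "b.mp3"), (downloads, "notes.txt")]
        for folder, filename in samples:
            with open(os.path.join(folder, filename), "w") as handle:
                handle.write("placeholder")
        library = Library("Music", [music, downloads])
        return [os.path.basename(path) for path in library.search("*.mp3")]
```

The point of the sketch is that the search runs over a subset of folders chosen by the user, and the caller never needs to know which physical folder a given file lives in.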

Home networking

- Automatic networking configuration when you plug a machine in, connecting to new “Homegroup”

- Automatic configuration of shared resources, like printers

- Can search across entire Homegroup (don’t need to know what machine a file lives on)

Media

- New lightweight media player

- Media center libraries now shared & integrated with Windows Explorer

- Right-click on media and select device to play on, e.g. home stereo

Devices

- New Device Stage window, summarizing all the operations you can perform with a connected device (e.g. mobile device)

- Configure the mobile device directly from this view

Gadgets

- Can now exist on your desktop even without the sidebar being present

Miscellaneous

- Can share desktop themes with other users

- User has full control of what icons appear in system tray

- New Action Center view is central place for reporting on PC’s performance and health characteristics

Multi-touch capabilities

- Even apps that are not touch-aware can leverage basic gestures (e.g. scrolling/zooming). Standard mouse behaviors are automatically mapped to equivalent gestures

- Internet Explorer has been made touch-aware, for additional functionality:

    - On-screen keyboard

    - Navigate to hyperlink by touching it

    - Back/Forward with flick gesture

Applet updates

- Wordpad gets Ribbon UI

- MS Paint gets Ribbon UI

- New calculator applet with separate Scientific / Programmer / Statistics modes

Sinofsky Redux

Sinofsky returned to touch on a few more points for Windows 7:

- Connecting to Live Services

- Vista “lessons learned”

- How developers will view Windows 7

Steve talked briefly about how Windows 7 will more seamlessly allow users to connect to “Live Essentials”, extending their desktop experience to the cloud. It’s not completely clear what this means. He mentioned the user choosing their own non-Microsoft services to connect to. I’m guessing that this is about some of the Windows 7 UI bits being extensible and able to incorporate data from Microsoft Live services. Third party services could presumably also provide content to Windows 7, assuming that they implemented whatever APIs are required.

The next segment was a fun one—Vista “lessons learned”. Steve made a funny reference to all of the feedback that Microsoft has gotten on Vista, including a particular TV commercial. It was meant as a clever joke, but Steve didn’t get that many laughs—likely because it was just too painfully true.

Here are the main lessons learned with Vista. (I’ve changed the verb tense slightly, so that we can read this as more of a confession).

- The ecosystem wasn’t ready for us.

    - Ecosystem required lots of work to get to the point where Vista would run on everything

    - 95% of all PCs running today are indeed able to run Vista

    - Windows 7 is based on the same kernel, so we won’t run into this problem again

- We didn’t adhere to standards

    - He’s talking about IE7 here

    - IE8 addresses that, with full CSS standards compliance

    - They’ve even released their compliance test results to the public

    - Win 7 ships with IE8, so we’re fully standards-compliant, out of the box

- We broke application compatibility

    - With UAC, applications were forced to support running as a standard user

    - It was painful

    - We had good intentions and Vista is now more secure

    - But we realize that UAC is still horribly annoying

    - Most software now supports running as a standard user

- We delivered features, rather than solutions to typical user scenarios

    - E.g. Most typical users have no hope of properly setting up a home network

    - Microsoft failed to deliver the “last mile” of required functionality

    - Much better in Windows 7, with things like automatic network configuration

The read-between-the-lines takeaway is we won’t make these same mistakes with Windows 7. That’s a clever message. The truth is that these shortcomings have basically already been addressed in Vista SP1. So because Windows 7 is essentially just the next minor rev of Vista, it inherits the same solutions.

But there is one shortcoming with Vista that Sinofsky failed to mention—branding. Vista is still called “Vista” and the damage is already done. There are users out there who will never upgrade to Vista, no matter what marketing messages we throw at them. For these users, we have Windows 7—a shiny new brand to slap on top of Vista, which is in fact a stable platform.

This is a completely reasonable tactic. Vista basically works great—the only remaining problem is the perception of its having not hit the mark. And Microsoft’s goal is to create the perception that Windows 7 is everything that Vista was not.

Enough ranting. On to Sinofsky’s list of things that Windows 7 provides for Windows developers:

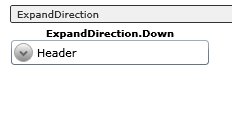

- The ribbon UI

    - The new Office ribbon UI element has proved itself in the various Office apps. So it’s time to offer it up to developers as a standard control

    - The ribbon UI will also gradually migrate to other Windows/Microsoft applications

    - In Windows 7, we now get the ribbon in Wordpad and Paint. (I’m also suspecting that they are now WPF applications)

- Jump lists

    - These are new context menus built into the taskbar that applications can hook into

    - E.g. For “most recently used” file lists

- Libraries

    - Apps can make use of new Libraries concept, loading files from libraries rather than folders

- Multi-touch, Ink, Speech

    - Apps can leverage new input mechanisms

    - These mechanisms just augment the user experience

    - New/unique hardware allows for some amazing experiences

- DirectX family

    - API around powerful graphics hardware

    - Windows 7 extends the DirectX APIs

Next, Steven moved on to talk about basic fundamentals that have been improved in Windows 7:

Decrease

- Memory — kernel has smaller memory footprint

- Disk I/O — reduced registry reads and use of indexer

- Power — DVD playback cheaper, ditto for timers

Increase

- Speed — quicker boot time, device-ready time

- Responsiveness — worked hard to ensure Start Menu always very responsive

- Scale — can scale out to 256 processors

Yes, you read that correctly—256 processors! Hints of things to come over the next few years on the hardware side. Imagine how slow your single-threaded app will appear to run when running on a 256-core machine!
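A quick back-of-the-envelope check on that last point, using Amdahl's law: if only a fraction p of a program's work can run in parallel, the best possible speedup on n cores is 1 / ((1 - p) + p / n). The Python sketch below just evaluates that formula. The function names are mine, and the parallel fractions are made-up inputs rather than measurements of any real application.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Upper bound on speedup when only `parallel_fraction` of the work uses all `cores`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def efficiency(parallel_fraction, cores):
    """Speedup divided by core count: the share of the machine doing useful work."""
    return amdahl_speedup(parallel_fraction, cores) / cores


if __name__ == "__main__":
    # Illustrative parallel fractions only, from fully serial to fully parallel.
    for fraction in (0.0, 0.5, 0.95, 1.0):
        print(fraction, amdahl_speedup(fraction, 256), efficiency(fraction, 256))
```

A strictly single-threaded app (p = 0) gets a speedup of exactly 1 on 256 cores, so it doesn't run any slower in absolute terms. It just leaves 255 of the 256 cores idle, which is why it will look slow next to anything that does scale.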

Sinofsky at this point ratcheted up and went into a sort of but wait, there’s more mode that would put Ron Popeil to shame. Here are some other nuggets of goodness in Windows 7:

- Bitlocker encryption for memory sticks

- No more worries when you lose these

- Natively mount/manage Virtual Hard Disks (VHDs)

- Create VHDs from within Windows

- Boot from VHDs

- DPI

- Easier to set DPI and work with it

- Easier to manage multiple monitors

- Accessibility

- Built-in magnifier with key shortcuts

- Connecting to an external projector in Alt-Tab fashion

- Could possibly be the single most important reason for upgrading to Win 7

- Remote Desktop can now access multiple monitors

- Can move Taskbar all over the place

- Can customize the shutdown button (cheers)

- Action Center allows turning off annoying messages from various subsystems

- New slider that allows user to tweak the “annoying-ness” of UAC (more cheers)

As a final note, Sinofsky mentioned that as developers, we had damn well better all be developing for 64-bit platforms. A good percentage of new boxes shipping with Windows 7 are likely to be x64. (His language wasn’t this strong, but that was the message).

Scott Guthrie

As wilted as we all were with the flurry of Windows 7 takeaways, we were only about half done. Scott Guthrie, VP, Developer Division at Microsoft, came on stage to talk about development tools.

He started by pointing out that you can target Windows 7 features from both managed (.NET) and native (Win32) applications. Even C++/MFC are being updated to support some of the new features in Windows 7.

Scott talked briefly about the .NET Framework 3.5 SP1, which has already been released:

- Streamlined setup experience

- Improved startup times for managed apps (up to 40% improvement to cold startup times)

- Graphics improvements, better performance

- DirectX interop

- More controls

- 3.5 SP1 built into Windows 7

Scott then demoed taking an existing WPF application and adding support for Windows 7 features:

- He added a ribbon at the top of the app

- Add JumpList support for MRU lists in the Windows taskbar

- Added Multi-touch support

Scott announced a new WPF toolkit being released this week that includes:

- DatePicker, DataGrid, Calendar controls

- Visual State Manager support (like Silverlight 2)

- Ribbon control (CTP for now)

Scott talked about some of the basics coming in .NET 4 (coming sometime in 2009?):

- Different versions of .NET CLR running SxS in the same process

- Easier managed/native interop

- Support for dynamic languages

- Extensibility Component Model (MEF)

At this point, Scott also started dabbling in the but wait, there’s more world, as he demoed Visual Studio 2010:

- Much better design-time support for WPF

- Visual Studio itself now rewritten in WPF

- Multi-monitor support

- More re-factoring support

- Better support for Test Driven Development workflow

- Can easily create plugins using MEF

Whew. Now he got to the truly sexy part—probably the section of the keynote that got the biggest reaction out of the developer crowd. Scott showed off a little “third party” Visual Studio plug-in that pretty-formatted XML comments (e.g. function headers) as little graphical WPF widgets. Even better, the function headers, now graphically styled, also contained hot links right into a local bug database. Big cheers.

Sean’s prediction—this will lead to a new ecosystem for Visual Studio plugins and interfaces to other tools.

Another important takeaway—MEF, the new extensibility framework, isn’t just for Visual Studio. You can also use MEF to extend your own applications, creating your own framework.
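For a feel of what that kind of extensibility looks like in your own application, here is a rough Python analogy: parts register themselves under a named contract, and the host asks for everything that satisfies the contract without knowing the concrete classes. This mirrors the general export/import idea only. It is not the MEF API, and `export`, `compose`, the `comment_formatter` contract name, and the two formatter classes are all hypothetical.

```python
from collections import defaultdict

# Registry of contract name -> list of classes exported under that contract.
_EXPORTS = defaultdict(list)


def export(contract):
    """Class decorator that registers a class as a provider of `contract`."""
    def register(cls):
        _EXPORTS[contract].append(cls)
        return cls
    return register


def compose(contract):
    """Instantiate every registered provider of `contract`, in registration order."""
    return [cls() for cls in _EXPORTS[contract]]


@export("comment_formatter")
class UppercaseFormatter:
    def format(self, text):
        return text.upper()


@export("comment_formatter")
class BracketFormatter:
    def format(self, text):
        return "[" + text + "]"


class Host:
    """The host application knows only the contract name, not the concrete parts."""

    def __init__(self):
        self.formatters = compose("comment_formatter")

    def render(self, text):
        # Run the text through every discovered part in turn.
        for formatter in self.formatters:
            text = formatter.format(text)
        return text
```

With both parts registered, `Host().render("summary")` passes the text through each formatter in registration order. Adding a third formatter means writing one more decorated class, with no change to `Host`.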

Tesco.com Demo of Rich WPF Client Application

Here we got our obligatory partner demo, as a guy from Tesco.com showed off their snazzy application that allowed users to order groceries. Lots of 2D and 3D graphical effects—one of the more compelling WPF apps that I’ve seen demoed.

Scott Redux

Scott came back out to talk a bit about new and future offerings on the web development side of things.

Here are some of the ASP.NET improvements that were delivered with .NET 3.5 SP1:

- Dynamic Data

- REST support

- MVC (Model-View-Controller framework)

- AJAX / jQuery (with jQuery intellisense in Visual Studio 2008)

ASP.NET 4 will include:

- Web Forms improvements

- MVC improvements

- AJAX improvements

- Richer CSS support

- Distributed caching

Additionally, Visual Studio 2010 will include better support for web development:

- Code-focused improvements (??)

- Better JavaScript / AJAX tooling

- Design View CSS2 support

- Improved publishing and deployment

Scott then switched gears to talk about new and future offerings for Silverlight.

Silverlight 2 was just RTM’d two weeks ago. Additionally, Scott presented two very interesting statistics:

- Silverlight 1 is now present on 25% of all Internet-connected machines

- Silverlight 2 has been downloaded to 100 million machines

IIS will inherit the adaptive (smooth) media streaming that was developed for the NBC Olympics web site. This is available today.

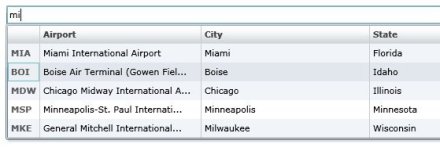

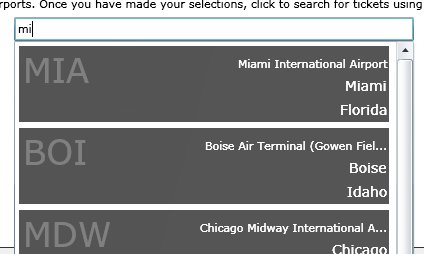

A new Silverlight toolkit is being released today, including:

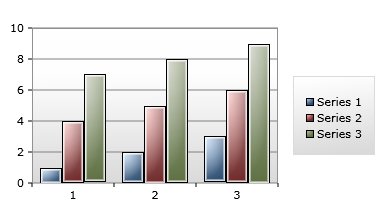

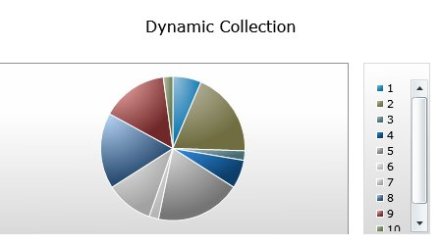

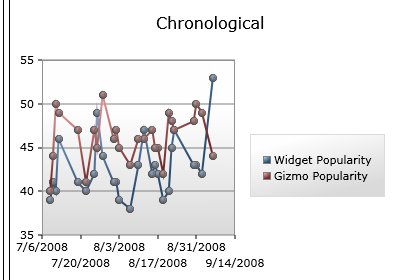

- Charting controls, TreeView, DockPanel, WrapPanel, ViewBox, Expander, NumericUpDown, AutoComplete et al

- Source code will also be made available

Visual Studio 2010 will ship with a Silverlight 2 designer, based on the existing WPF designer.

We should also expect a major release of Silverlight next year, including things like:

- H.264 media support

- Running Silverlight applications outside of the browser

- Richer graphics support

- Richer data-binding support

Whew. Take a breath.

David Treadwell – Live Services

While we were all still reeling from Scott Gu’s segment, David Treadwell, Corporate VP, Live Platform Services at Microsoft, came out to talk about Live Services.

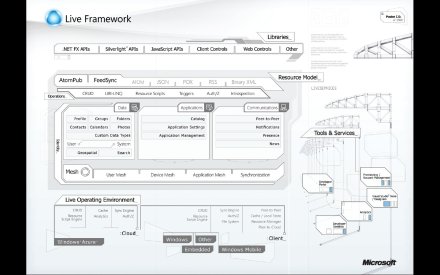

The Live Services offerings are basically a set of services that allow applications to interface with the various Windows Live properties.

The key components of Live Services are:

- Identity – Live ID and federated identity

- Directory – access to social graph through a Contacts API

- Communication & Presence – add Live Messenger support directly to your web site

- Search & Geo-spatial – including mashups on your web sites

The Live Services are all made available via standards-based protocols. This means that you can invoke them from not only the .NET development world, but also from other development stacks.

David talked a lot about Live Mesh, a key component of Live Services:

- Allows applications to bridge Users / Devices / Applications

- Data synchronization is a core concept

Applications access the various Live Services through a new Live Framework:

- Set of APIs that allow apps to get at Live Services

- Akin to CLR in desktop environment

- Live Framework available from PC / Web / Phone applications

- Open protocol, based on REST, callable from anything

Ori Amiga Demo

Ori Amiga came out to do a quick demonstration of how to “Meshify” an existing application.

The basic idea of Mesh is that it allows applications to synchronize data across all of a user’s devices. Importantly, this works only for users who have already signed up for Live Mesh.

Live Mesh supports storing the user’s data “in the cloud”, in addition to on the various devices. But this isn’t required. Applications could use Mesh merely as a transport mechanism between instances of the app on various devices.
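Here is a small Python sketch of the "transport only" scenario, where two instances of an app exchange changes directly and nothing is stored in the cloud. It is a toy version-number merge written for illustration. It does not reflect how Live Mesh actually synchronizes data, and `Replica` and `sync` are hypothetical names.

```python
class Replica:
    """Hypothetical per-device copy of an app's data: key -> (version, value)."""

    def __init__(self, device):
        self.device = device
        self.items = {}

    def put(self, key, value):
        # Each local write bumps the key's version number.
        version, _old = self.items.get(key, (0, None))
        self.items[key] = (version + 1, value)

    def get(self, key):
        return self.items[key][1]


def sync(a, b):
    """Two-way merge: for every key, both replicas end up with the higher-versioned entry."""
    for key in set(a.items) | set(b.items):
        entry_a = a.items.get(key, (0, None))
        entry_b = b.items.get(key, (0, None))
        # Ties keep a's entry; a real system needs a proper conflict policy.
        winner = entry_a if entry_a[0] >= entry_b[0] else entry_b
        a.items[key] = winner
        b.items[key] = winner


def demo():
    """Write on the laptop, sync to the phone, edit on the phone, sync back."""
    laptop = Replica("laptop")
    phone = Replica("phone")
    laptop.put("shopping", "milk")
    sync(laptop, phone)
    phone.put("shopping", "milk, eggs")
    sync(laptop, phone)
    return laptop.get("shopping"), phone.get("shopping")
```

After the second sync both devices hold the phone's newer edit. The sketch sidesteps the hard part, which is two devices editing the same item while offline. Presumably that is exactly the kind of problem a platform service would be expected to handle for you.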

Takeshi Numoto – The Closer

Finally, Takeshi Numoto, GM, Office Client at Microsoft, came out to talk about Office 14.

Office 14 will deliver Office Web Applications—lightweight versions of the various Office applications that run in a browser. Presumably they can also store all of their data in the cloud.

Takeshi then did a demo that focused a bit more on the collaboration features of Office 14 than on the ability to run apps in the browser. (Running in the browser just works and the GUI looks just like the rich desktop-based GUI).

Takeshi showed off some pretty impressive features and use cases:

- Two users editing the same document at the same time, both able to write to it

- As users change pieces of the document, little graphical widget shows up on the other user’s screen, showing what piece the first user is currently changing. All updated automatically, in real-time

- Changes are pushed out immediately to other users who are viewing/editing the same document

- This works in Word, Excel, and OneNote (at least these apps were demoed)

- Can publish data out to data stores in Live Services

Ray’s Wrapup

Ray Ozzie came back out to wrap everything up. He pointed out that everything we’d seen today was real. He also pointed out that some of these technologies were more “nascent” than others. In other words—no complaints if some bits don’t work perfectly. It seemed an odd note to end on.