PDC 2008, Day #3, Session #1, 1 hr 15 mins

Ori Amiga

My next session dug a bit deeper into the Live Framework and some of the architecture related to building a Live Mesh application.

Ori Amiga was presenting, filling in for Dharma Shukla (who just became a new Dad).

Terminology

It’s still a little unclear what terminology I should be using. In some areas, Microsoft is switching from “Mesh” to just plain “Live”. (E.g. Mesh Operating Environment is now Live Operating Environment). And the framework that you use to build Mesh applications is the Live Framework. But they are still very much talking about “Mesh”-enabled applications.

I think that the way to look at it is this:

- Azure is the lowest level of cloud infrastructure stuff

- The Live Operating Environment runs on top of Azure and provides some basic services useful for cloud applications

- Mesh applications run on top of the LOE and provide access to a live.com user’s “mesh”: the devices, applications and data that live inside their mesh

I think that this basically means that you could have an application that makes use of the various Live Services in the Live Operating Environment without actually being a Mesh application. On the other hand, some of the services in the LOE don’t make any sense to non-Mesh apps.

Live Operating Environment (LOE)

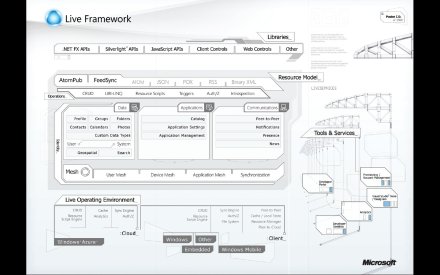

Ori reviewed the Live Operating Environment, which is the runtime that Mesh applications run on top of. Here’s a diagram from Mary Jo Foley’s blog:

This diagram sort of supports my thought that access to a user’s mesh environment is different from the basic stuff provided in the LOE. According to this particular view, Live Services are services that provide access to the “mesh stuff”, like the user’s contact lists, information about their devices, the data stores (data stored in the mesh or out on the devices), and other applications in that user’s mesh.

The LOE would contain all of the other stuff: basically a set of utility classes, akin to the CLR for desktop-based applications. (Oh wait, Azure is supposed to be “akin to the CLR”). *smile*

Ori talked about a list of services that live in the LOE, including:

- Scripting engine

- Formatters

- Resource management

- FSManager

- Peer-to-peer communications

- HTTP communications

- Application engine

- Apt(?) throttle

- Authentication/Authorization

- Notifications

- Device management

Here’s another view of the architecture (you can also find it here).

Also, for more information on the Live Framework, you can go here.

Data in the Mesh

Ori made an important point about how Mesh applications access their data. If you have a Mesh client running on your local PC, and you’ve set up its associated data store to synch between the cloud and that device, the application works against the local data, rather than pulling data down from the cloud. Because it’s working entirely with locally cached data, it can run faster than the corresponding web-based version (e.g. running in the Live Desktop).
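To make the local-data point concrete, here is a minimal Python sketch. It is not Live Framework code: `CloudStore`, `LocalReplica` and the `sync` method are hypothetical names invented for illustration, and a plain dictionary copy stands in for whatever the real synchronization does.

```python
class CloudStore:
    """Stand-in for the cloud copy of a data store (hypothetical)."""

    def __init__(self):
        self.items = {}
        self.reads = 0  # counts read round trips made against the cloud

    def get(self, key):
        self.reads += 1
        return self.items[key]


class LocalReplica:
    """Stand-in for the synchronized store on the local device (hypothetical)."""

    def __init__(self, cloud):
        self.cloud = cloud
        self.cache = {}

    def sync(self):
        # The real synchronization mechanism is not shown in the session notes;
        # a plain copy stands in for it here.
        self.cache = dict(self.cloud.items)

    def get(self, key):
        # Reads are served from the local copy only, never from the cloud.
        return self.cache[key]


cloud = CloudStore()
cloud.items["note-1"] = "buy milk"

replica = LocalReplica(cloud)
replica.sync()
value = replica.get("note-1")
```

After the sync, the application reads `note-1` without a single read against the cloud store, which is the reason the local client can be faster than the web-based version.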

Resource Scripts

Ori talked a lot about resource scripts and how they might be used by a Mesh-enabled application. An application can perform actions in the Mesh using these resource scripts, rather than performing actions directly in the code.

The resource scripting language contains things like:

- Control flow statements – sequence and interleaving, conditionals

- Web operation statements – to issue HTTP POST/PUT/GET/DELETE

- Synchronization statements – to initiate data synchronization

- Data flow constructs – for binding statements to other statements(?)

Ori did a demo that showed off a basic script. One of the most interesting things was how he combined sequential and interleaved statements. The idea is that you specify what things you need to do in sequence (like getting a mesh object and then getting its children), and what things you can do in parallel (like getting a collection of separate resources). The parallelism is automatically taken care of by the runtime.
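The sequence/interleave idea can be sketched in ordinary Python with `asyncio`. This is only an analogy, not the resource scripting language itself: the `fetch` helper and the resource names are made up, and a short sleep stands in for a real HTTP GET.

```python
import asyncio


async def fetch(name, log):
    # Hypothetical stand-in for an HTTP GET against a mesh resource.
    log.append(f"start {name}")
    await asyncio.sleep(0.01)
    log.append(f"done {name}")
    return name


async def run_script():
    log = []

    # Sequence: the children request depends on the mesh object,
    # so the second step waits for the first to finish.
    mesh_object = await fetch("mesh-object", log)
    children = await fetch(f"{mesh_object}/children", log)

    # Interleave: these resources are independent, so both requests
    # are in flight at the same time.
    contacts, devices = await asyncio.gather(
        fetch("contacts", log),
        fetch("devices", log),
    )
    return log, [children, contacts, devices]


log, results = asyncio.run(run_script())
```

In the log, the two sequential steps finish one after the other, while both interleaved requests start before either one completes. In the resource script the author only declares which statements are sequential and which are interleaved; the scheduling is left to the runtime, much as it is left to the event loop here.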

Custom Data

Ori also talked quite a bit about how an application might view its data. The easiest thing to do would be to simply invent your own schema and then be the only app that reads/writes the data in that schema.

A more open strategy, however, would be to create a data model that other applications could use. Ori talked philosophically here, arguing that this openness serves to improve the ecosystem. If you can come up with a custom data model that might be useful to other applications, they could be written to work with the same data that your application uses.

Ori demonstrated this idea of custom data in Mesh. Basically you create a serializable class and then mark it up so that it gets stored as user data within a particular DataEntry. (Remember the hierarchy: Mesh objects contain data feeds, which contain data entries.)

This is an attractive idea, but the implementation seems a bit clunky. The custom data is embedded in the standard AtomPub stream, but it looked like it was simply jammed into an XML element inside the <DataEntry> element, so your custom data items would not be directly queryable.

Ori did go on to admit that custom data isn’t queryable or indexable, and that it is really meant only for “lightweight data”. This is at odds with the philosophy of a reusable schema for other applications.
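Here is a rough Python sketch of what "custom data jammed into an entry" implies for anyone who wants to query it. Everything in it is an assumption made for illustration: the `Recipe` class, the `UserData` element name and the JSON payload are invented, and the real demo used a marked-up .NET class rather than anything shown here.

```python
import json
import xml.etree.ElementTree as ET
from dataclasses import asdict, dataclass

ATOM = "http://www.w3.org/2005/Atom"


@dataclass
class Recipe:
    """Hypothetical custom data model."""
    name: str
    servings: int


def to_entry(title, custom):
    # Build an Atom-style entry and tuck the serialized object into a
    # single opaque element ("UserData" is a made-up name).
    entry = ET.Element(f"{{{ATOM}}}entry")
    ET.SubElement(entry, f"{{{ATOM}}}title").text = title
    ET.SubElement(entry, "UserData").text = json.dumps(asdict(custom))
    return entry


def from_entry(entry):
    # The payload is opaque to the feed, so it has to be deserialized
    # before any of its fields can be inspected.
    return Recipe(**json.loads(entry.find("UserData").text))


entries = [
    to_entry("Soup", Recipe("Soup", 6)),
    to_entry("Toast", Recipe("Toast", 1)),
]

# "Querying" means pulling every entry and filtering on the client.
large = [r.name for r in map(from_entry, entries) if r.servings > 4]
```

Because the feed only sees an opaque blob, a question like "which recipes serve more than four?" cannot be answered by the store. Every entry has to be fetched and deserialized first, which is tolerable for lightweight data and painful for anything larger.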

Tips & Tricks

Finally, Ori presented a handful of tips & tricks for working with Mesh applications:

- To clean out the local data cache, just delete the DB/MR/Assembler directories and re-synch

- Local metadata is actually stored in SQL Server Express. Go ahead and peek at it, but be careful not to mess it up.

- Use the Resource Model Browser to really see what’s going on under the covers. What it shows you represents the truth of what’s happening between the client and the cloud

- One simple way to track synch progress is to just look at the size of the Assembler and MR directories

- Collect logs and send to Microsoft when reporting a problem
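The directory-size tip is easy to script. Below is a small Python sketch, assuming you already know where the Assembler and MR directories live on your machine (the session notes don't record the paths, so none are filled in here). `dir_size_bytes` is just a helper name chosen for this example.

```python
import os


def dir_size_bytes(path):
    """Return the total size in bytes of all files under path."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            try:
                total += os.path.getsize(os.path.join(root, name))
            except OSError:
                # A file may disappear or be locked while a synch is running.
                pass
    return total
```

Calling this every few seconds on each directory and printing the result gives a crude progress indicator: if the numbers keep changing, the synch is still moving data.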

Summary

Ori finished with the following summary:

- Think of the cloud as just a special kind of device

- There is a symmetric cloud/client programming model

- Everything is a Resource