BUILD 2014, San Francisco

Keynote 2 – Scott Guthrie, Rick Cordella (NBC), Mark Russinovich, Luke Kanies (Puppet), Daniel Spurling (Getty), Mads Kristensen, Yavor Georgiev, Grant Peterson (DocuSign), Anders Hejlsberg, Miguel de Icaza (Xamarin), Bill Staples, Steve Guggenheimer, John Shewchuk

Day 2, 3 Apr 2014, 8:30AM-11:30AM

Disclaimer: This post contains my own thoughts and notes based on watching BUILD 2014 keynotes and presentations. Some content maps directly to what was originally presented. Other content is paraphrased or represents my own thoughts and opinions and should not be construed as reflecting the opinion of Microsoft or of the presenters or speakers.

Scott Guthrie – EVP, Cloud + Enterprise

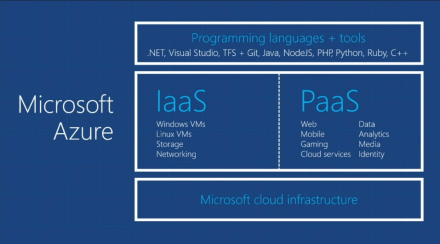

Azure

- IaaS and PaaS

- Windows & Linux

- Developer productivity

- Tons of new features in 2013

- Lots more new features for 2014

Expanding Azure around the world (green circles are Azure regions):

Run apps closer to your customers

Some stats:

Did he just say 1,000,000 SQL Server databases? Wow

Great experiences that use Azure

Video of Titanfall / Azure

- Data centers all over

- Spins up dedicated server for you when you play

- “Throw ’em a server” – constantly available set of servers

- AI & NPCs powered by server

Scale

- Titanfall had >100,000 VMs deployed/running on launch day

Olympics NBC Sports (Sochi)

- NBC used Azure to stream games

- 100 million viewers

- Streaming/encoding done w/Azure

- Live-encode across multiple Azure regions

- >2.1 million concurrent viewers (online HD streaming)

Olympics video:

Generic happy Olympics video here

Rick Cordella – NBC Sports (comes out to chat with Scott)

Scaling

- All the way from lowest demand event—curling (poor Curling)

- Like that Scott has to prompt this guy: “How important is this to NBC?”

- Scott: “I’m glad it went well”

Just Scott again

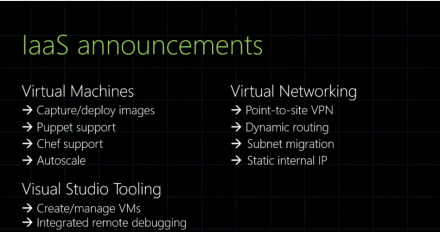

Virtual Machines

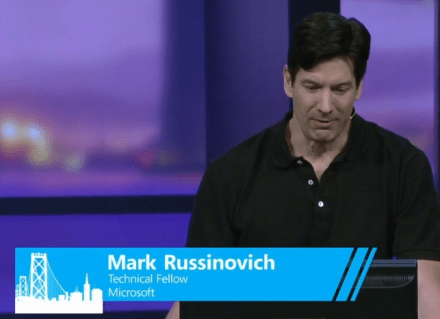

Mark Russinovich – Technical Fellow

Demo of creating VM from Visual Studio

Create VM

- Deploy into existing cloud service

- Pick storage account

- Configure network ports

Debug VMs from Visual Studio on desktop

- E.g. Client & web service

- Switch to VM running web service

- Set breakpoint in web service

- Connect VS to machine in cloud

- Enable debugging on VM

- Rt-click on VM, Attach Debugger, pick process

- Hit breakpoint, on running (live) service

- This is great stuff..

Create copy of VM with multiple data disks

- Save-AzureVMImage cmdlet => capture to VM image

- Then provision new instance from previous VM image

- Very fast to provision new VM, based on a simple PowerShell command

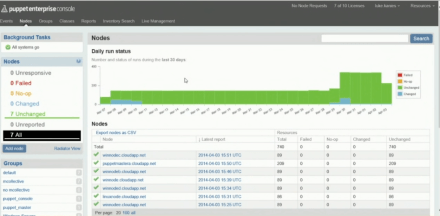

Integration with CM, e.g. Puppet

- Create Puppet Masters from VM

- Puppet Labs | Puppet Enterprise server template when creating VM

- Create client and install Puppet Enterprise Agent into new client VM; point it to puppet master

- Deploying code into VMs from Puppet Master – Luke Kanies (Puppet Labs)

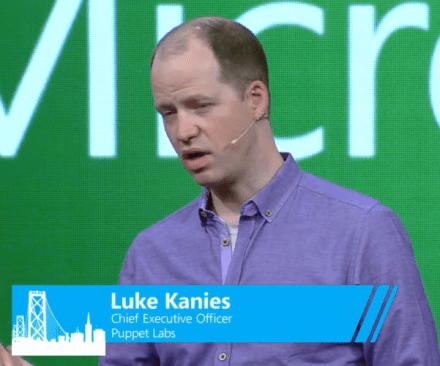

Luke Kanies – CEO, Puppet Labs

Puppet works on virtually any type of device

- Tens of millions of machines managed by Puppet

- Clients: NASA, GitHub, Intel, MBNA, et al

Example of how Puppet works

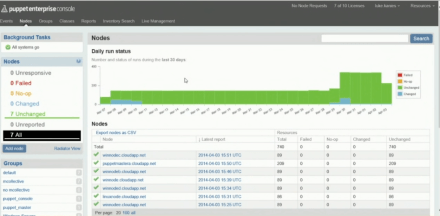

Puppet demo

- Puppet module, attach to machines from enterprise console

- Deploy using this module

- Goal is to get speed of configuration as fast as creation of VMs

Daniel Spurling – Getty Images

How is this (Azure) being used at Getty?

- New way for consumer market to use images for non-commercial use

- The technology has to scale, to support “massive content flow”

- They use Azure & Puppet

- Puppet – automation & configuration management

- Burst from their data center to external cloud (Azure only for extra traffic)?

Back to Scott Guthrie

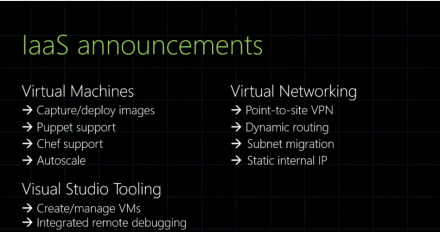

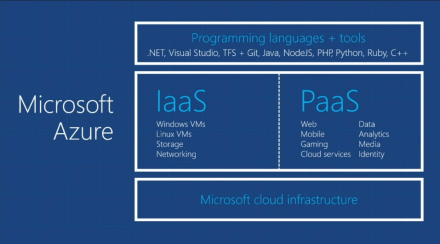

Summary of IaaS stuff:

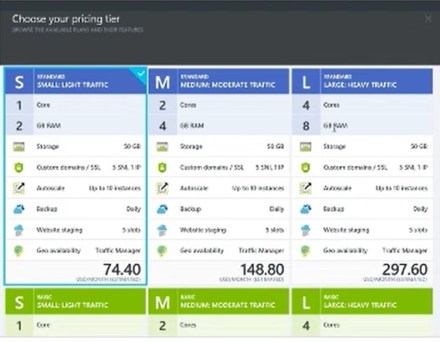

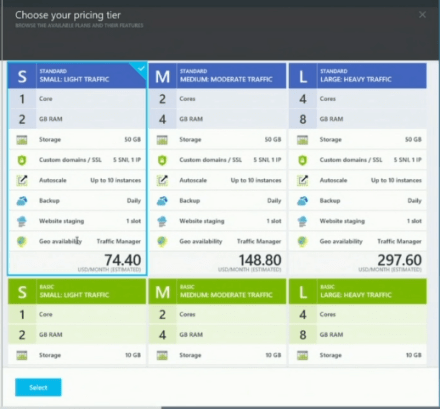

Also provide pre-built services and runtime environments (PaaS)

- Focus on application and not infrastructure

- Azure handles patching, load balancing, autoscale

Web functionality

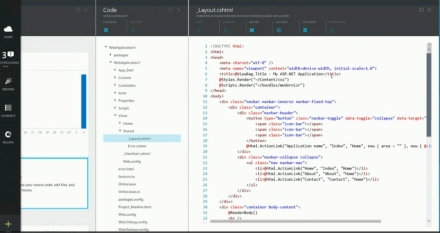

Demo – Mads Kristensen

Mads Kristensen

ASP.NET application demo

- PowerShell editor in Visual Studio

- Example—simple site with some animated GIFs (ClipMeme)

- One way to do this—from within Browser development tools, change CSS, then replicate in VS

Now—do change in Visual Studio

- It automatically syncs with dev tools in browser

- BrowserLink

- Works for any browser

- If you change in browser tools in one browser, it gets automatically replicated in VS & other tools

Put Chrome in design mode

- As you hover, VS goes to the proper spot in the content

- Make change in browser and it’s automatically synced back to VS

- Example of editing some AngularJS

Publish – to Staging

- Publishes just changes

- “-staging” as part of URL (is this configurable? Or can external users hit the staging version of the site?)

Then Swap when you’re ready to officially publish

- Staging stuff over to production

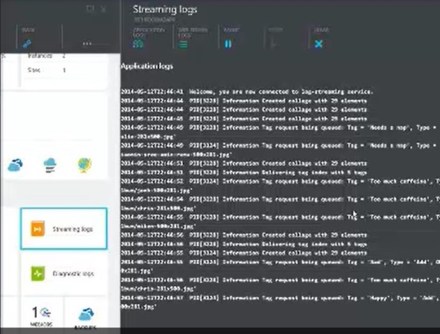

WebJobs

- Run background task in same context as web site

- Section in Azure listing them

- Build as simple C# console app

- Associate this WebJob with a web site

- In Web app in VS, associate to WebJob

- Dashboard shows invocation of WebJob, with return values (input, output, call stack)

- (No applause??)

Traffic Manager

- Performance / Round Robin / Failover

- Failover—primary node and secondary node

- Pick endpoints

- Web site says “you are being served from West US”—shows that we hit appropriate region
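
The three routing policies above can be sketched roughly as follows. This is only an illustration of the ideas, not Traffic Manager's actual implementation; the function names, the endpoint names, and the latency numbers are all made up for the example.

```python
from itertools import cycle
from typing import Dict, Iterator, List, Optional


def pick_failover(endpoints: List[str], healthy: Dict[str, bool]) -> Optional[str]:
    """Failover: return the first healthy endpoint in priority order (primary first)."""
    for name in endpoints:
        if healthy.get(name, False):
            return name
    return None  # nothing is healthy


def pick_performance(latency_ms: Dict[str, float]) -> str:
    """Performance: return the endpoint with the lowest measured latency."""
    return min(latency_ms, key=latency_ms.get)


def round_robin(endpoints: List[str]) -> Iterator[str]:
    """Round robin: hand out endpoints in a repeating cycle."""
    return cycle(endpoints)


if __name__ == "__main__":
    # Hypothetical endpoints; the primary (west-us) is marked as down.
    print(pick_failover(["west-us", "east-us"], {"west-us": False, "east-us": True}))
    print(pick_performance({"west-us": 20.0, "east-us": 80.0}))
```

In the demo, the "you are being served from West US" message is the visible result of this kind of choice.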

Back to Scott

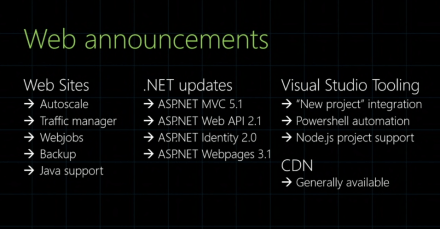

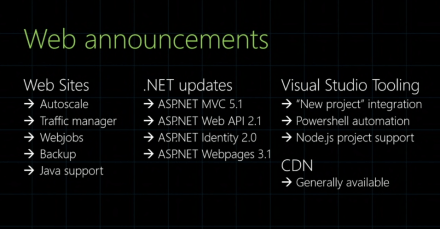

Summary of Web stuff:

SSL

- Including SSL cert with every web site instance (don’t have to pay Verisign)

ASP.NET MVC 5.1

Every Azure customer gets 10 free web sites

Mobile Services

Yavor Georgiev

Demo – Mobile Services

Mobile Service demo

- New template for Mobile Service (any .NET language)

- Built on Web API

- E.g. ToDoItem and ToDoItemController

- Supports local development

- Test client in browser

- Local / Remote debugging work with Mobile Services

Demo – building app to report problem with Facilities

Another great Microsoft demo line: “it’s just that easy”

More demo, Xamarin

- Portable library, reuse with Xamarin

- iOS project in Visual Studio

- Run iPhone simulator from iOS

- Switch to paired Mac

- Same app on iOS, using portable class library

Yavor has clearly memorized his presentation—nice job, but a bit mechanical

Back to Scott alone

Azure Active Directory service

- Active Directory in the cloud

- Can sync with on-premises Active Directory

- Single sign-on with enterprise credentials

- Then reuse token with Office 365 stuff

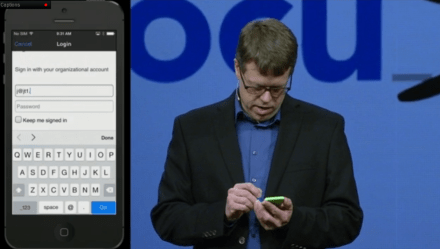

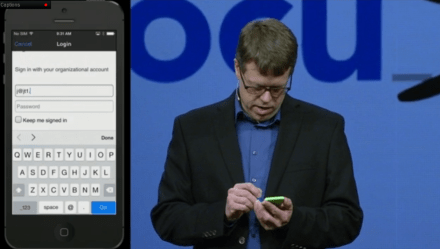

Grant Peterson – CTO, DocuSign

Demo – DocuSign

- Service built entirely on Microsoft stack (SQL Server, C#, .NET, IIS)

- Take THAT, iPhone app!

- 3,000,000 downloads on iPhone so far

- Can now authenticate with Active Directory

- Then can send a document, etc.

- Pull document up from SharePoint, on iPhone, and sign the document

- He draws signature into document

- Then saves doc back to SharePoint

- His code sample shows that he’s doing Objective-C, not C#/Xamarin

Back to Scott

- Offline Data Sync !

- Kindle support

Azure – Data

- SQL Database – >1,000,000 databases now hosted

SQL Server improvements

- Increasing DB size to 500GB (from 150GB)

- New 99.95% SLA

Self service restore

- Oops, if you accidentally delete data

- Previously, you had to go to your backups

- Now—automatic backups

- You can automatically roll back based on clock time

- 31 days of backups

- Wow !

- Built-in feature, just there
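
A minimal sketch of the "roll back based on clock time" idea, assuming the only rule is that the requested restore point has to fall inside the 31-day backup window. The function name is hypothetical and this is not the actual Azure API.

```python
from datetime import datetime, timedelta

RETENTION = timedelta(days=31)  # backup window mentioned in the keynote


def can_restore_to(target: datetime, now: datetime) -> bool:
    """Return True if `target` lies inside the retention window ending at `now`."""
    return now - RETENTION <= target <= now


if __name__ == "__main__":
    now = datetime(2014, 4, 3, 12, 0)
    print(can_restore_to(now - timedelta(days=30), now))
    print(can_restore_to(now - timedelta(days=32), now))
```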

Active geo replication

- Run in multiple regions

- Can automatically replicate

- Can have multiple secondaries in read-only

- You can initiate failover to secondary region

- Multiple regions at least 500 miles away

- Data hosted in Europe stays in Europe (what happens in Brussels STAYS in Brussels)

HDInsight

- Big data analytics

- Hadoop 2.2, .NET 4.5

- I love saying “Hadoop”

Let’s talk now about tools

.NET improvements – Language – Roslyn

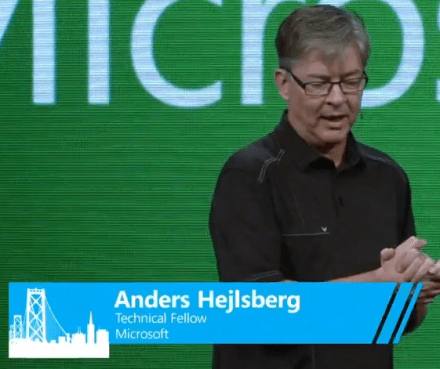

Anders Hejlsberg – Technical Fellow

Roslyn

- Compiler exposed as full API

- C#/VB compilers now written in C# and VB (huh? VB compiler really written in VB??)

Demo – C# 6.0

Static usings

- You type “using Math”

- IDE suggests re-factoring to remove the type name after we’ve added the using

- Roslyn helps us see preview of re-factored code

- Can rename methods, it checks validity of name

Announcement – open-sourcing entire Roslyn project

- Roslyn.codeplex.com

- Looking at source code—a portion of the source code for the C# compiler

- Anders publishes Roslyn live, on stage ! (that’s classy)

Demo – use Roslyn to implement a new language feature

- E.g. French-quoted string literals

- Lexer – tokenizing source code

- ScanStringLiteral implementation, add code for new quote character

- That’s incredibly slick..

- Then launch 2nd instance of Visual Studio, running modified compiler

- Holy crap

- Re-factoring also automatically picks up new language feature
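
To give a feel for the lexer change, here is a toy string-literal scanner that accepts both regular double quotes and French quotes. It is a Python illustration only; Roslyn's real ScanStringLiteral is C# and handles escapes, verbatim strings, and error recovery, none of which are shown here. The QUOTE_PAIRS table and the function signature are my own invention.

```python
from typing import Tuple

# Map each opening quote character to the closing quote that ends the literal.
QUOTE_PAIRS = {'"': '"', "«": "»"}


def scan_string_literal(source: str, start: int) -> Tuple[str, int]:
    """Scan a string literal that begins at source[start].

    Returns (literal_text, index_just_after_the_closing_quote).
    Raises ValueError if there is no opening quote at `start`
    or if the literal is never closed. Escape sequences are not handled.
    """
    opening = source[start]
    if opening not in QUOTE_PAIRS:
        raise ValueError(f"not a string literal at index {start}")
    closing = QUOTE_PAIRS[opening]
    end = source.find(closing, start + 1)
    if end == -1:
        raise ValueError("unterminated string literal")
    return source[start + 1:end], end + 1


if __name__ == "__main__":
    print(scan_string_literal('x = "hi";', 4))
    print(scan_string_literal("«bonjour»", 0))
```

Adding the new quote style comes down to one more entry in the table, which is roughly how small the on-stage change looked.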

Can now use Roslyn compilers on other platforms

Miguel de Icaza – CTO, Xamarin

Demo – Xamarin Studio

- Xamarin Studio can switch to use runtime with Roslyn compiler

- E.g. pick up compiler change that Anders just submitted to CodePlex

Miguel gives a C# t-shirt to Anders—that’s classic

Back to Scott

Open Source

- .NET foundation – dotnetfoundation.org

- All the Microsoft stuff that they’ve put out as open source

- Xamarin contributing various libraries

- This is good—Microsoft gradually more accepting of the open source movement/community

Two more announcements

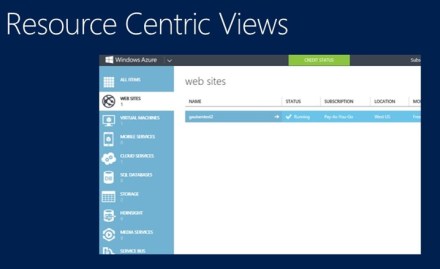

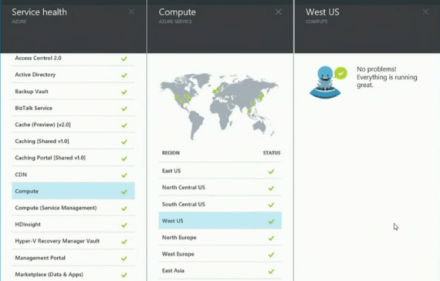

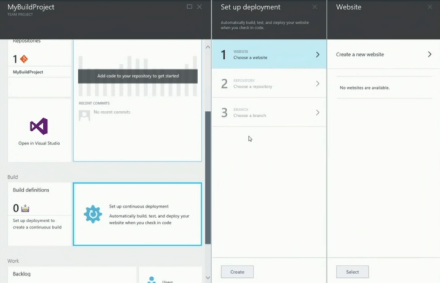

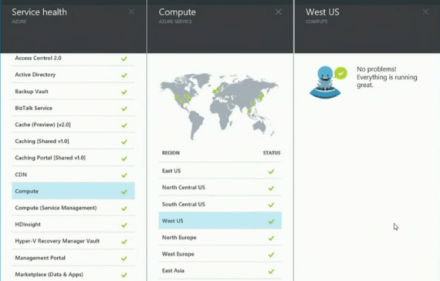

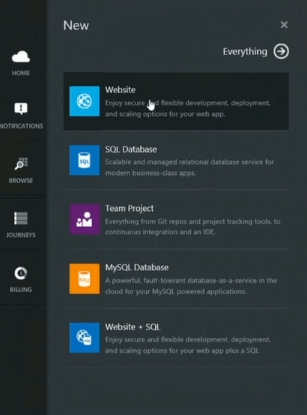

New Azure Portal

- Scott mentions DevOps (a good thing)

- First look at Azure Management Portal

- “Bold reimagining”

Bill Staples – Director of PM, Azure Application Platform

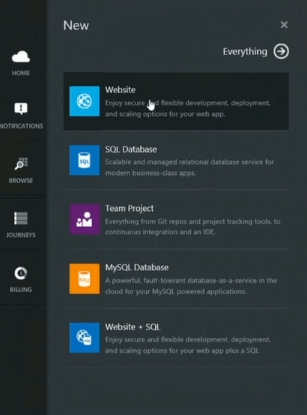

Azure start board

Some “parts” on here by default

- Service health – map

- Can I make this a bit smaller?

- Blade – drilldown into selected object – breadcrumb or “journey”

- Modern navigational structure

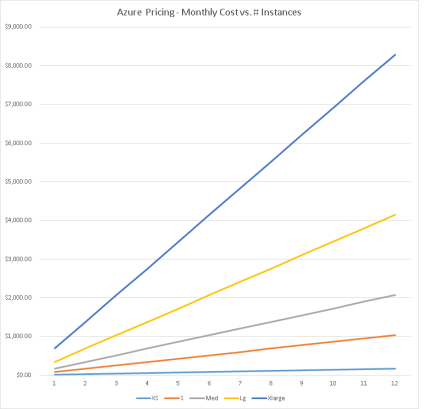

- Number one request—more insight into billing

- “You’re never going to be surprised by bills again”

- Creating instances:

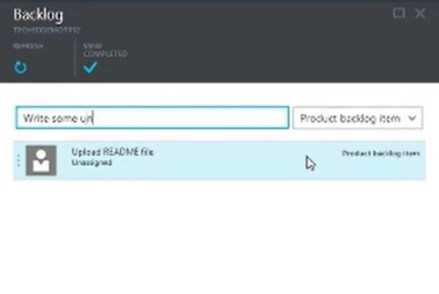

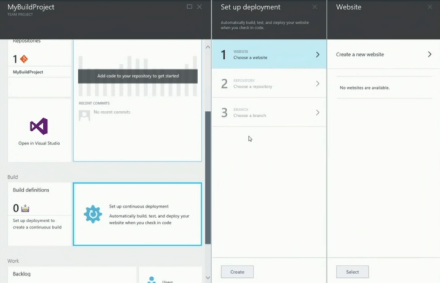

Demo – Set up DevOps lifecycle

- Using same services that Visual Studio Online uses

- Continuous deployment—new web site with project to deploy changes

- Open in Visual Studio

- Commit from Visual Studio—to local repository and repository in cloud

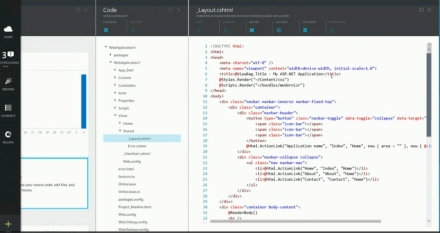

- Drill down into commits and even individual files

- Looking at source code from portal

- Can do commits from here, with no locally installed tools

- Can do diffs between commits

- Auto build and deploy after commit

- “Complete DevOps lifecycle in one experience”

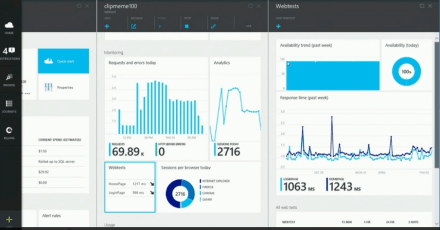

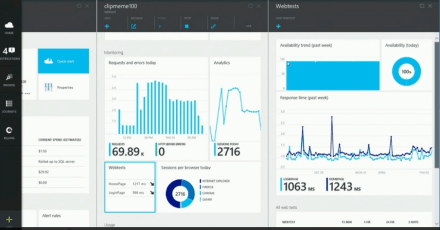

DevOps stuff

- Billing info for the web app

- Aggregated view of operations for this “resource group”

- Topology of app

- Analytics

- Webtests – measure experience from customer’s point of view

- “Average response time that the customer is enjoying”

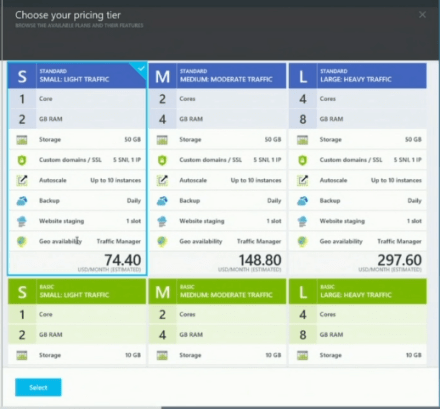

- Can re-scale up to Medium without re-deploying (finally!)

- Database monitoring

- I just have to say that this monitoring stuff is fantastic: all of the stuff that I was afraid I’d have to build myself

- Check Resource Group into source code – “Azure Resource Manager Preview”

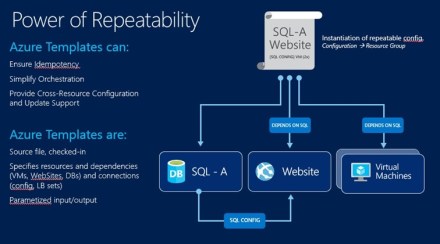

PowerShell – Resource Management Service

- Various templates that you can browse for various resource groups

- E.g. Web Site w/SQL Database

- Basically a JSON file—declarative description of the application

- Can pass the database connection string from DB to web app

- Very powerful stuff

- Can combine these scripts with Puppet stuff

Azure portal on tablet, e.g. Surface

- Or could put on big screen

- Here’s the Office Developer site

- Can see spike in page views, e.g. “what page was that?”

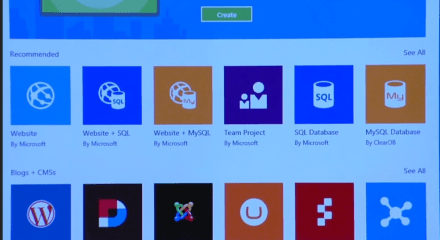

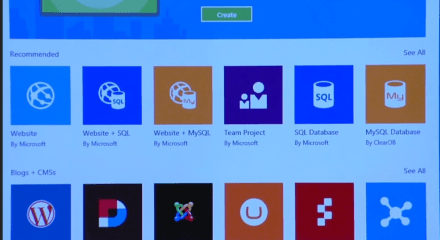

Azure Gallery:

“Amazing DevOps experience”

Back to Scott

Today

Get started – azure.microsoft.com

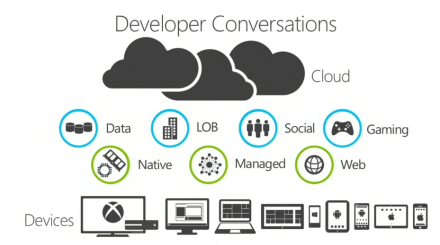

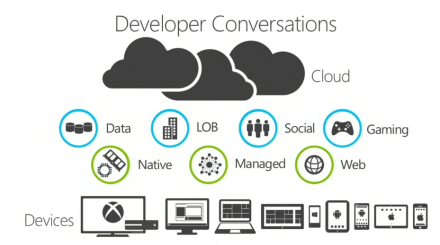

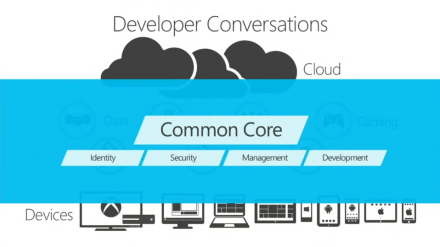

Steve Guggenheimer – Corp VP & Chief Evangelist, Microsoft

Dialog starts with type of app and devices

Areas of feedback

- Help me support existing investments

- Cloud and Mobile first development

- Maximize business opportunities across platforms

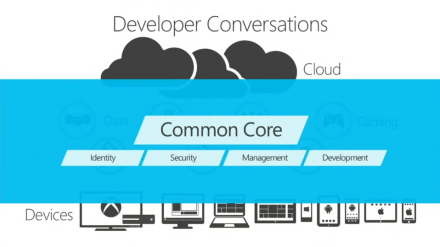

Should at least be very easy to work with “common core”:

“All or none”? — not the case

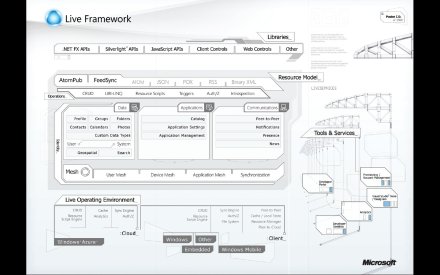

John Shewchuk – Technical Fellow

Support existing technologies

Desktop Apps

- WinRT, WPF (e.g. Morgan Stanley)

- Still going to build these apps in WPF

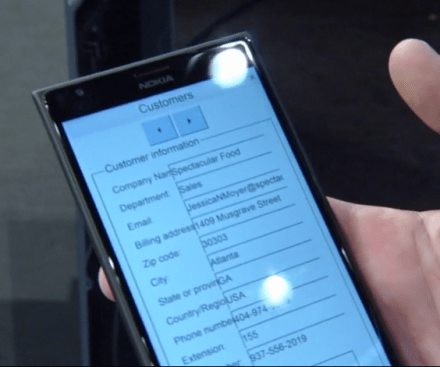

Demo – Typical App, Dental thing

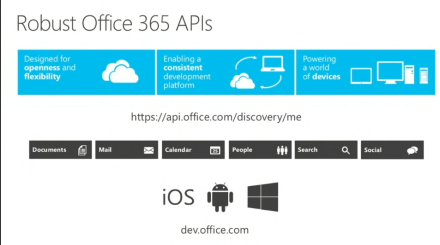

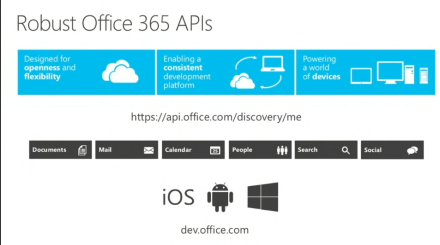

New Office 365 APIs:

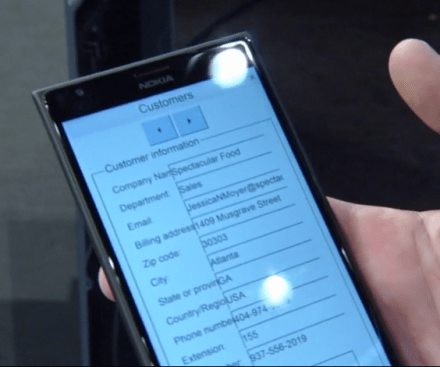

Demo – VB6 app – Sales Agent

Internet of Things:

Demo of Flight app for pilots, running on Surface:

- Value is—the combination of new devices, connecting to existing system

AutoDesk:

- Building complementary set of services

Flipboard on Windows 8

- Already had good web properties working with HTML5

- Created hybrid app, good native experience

- Brought app to phone (technology preview)

- Nokia 520, Flipboard fast on cheap/simple phone (uses DirectX)

Foursquare – tablet and Windows Phone app

- Win Phone Silverlight 8.1

- Run operation goes to see nearby venues

- E.g. Person goes to a geo location and live tile for Foursquare app pops up content

App showing pressure on foot – Heapsylon Sensoria socks

John Gruber – Daring Fireball

- Vesper – Notes app

- Using Mobile Services on Azure

- Wow—we’ve got John Gruber evangelizing about Windows? Whoda-thunkit!

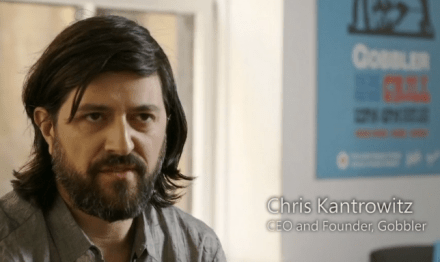

Gobbler – service for musicians and other “Creatives”

- Communication between musicians and collaborators

- DJ/Musician – sending files back and forth without managing data themselves

- Everything on Azure – “everything that we needed was already there”

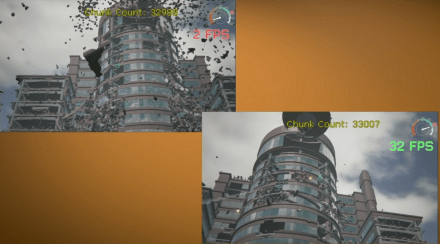

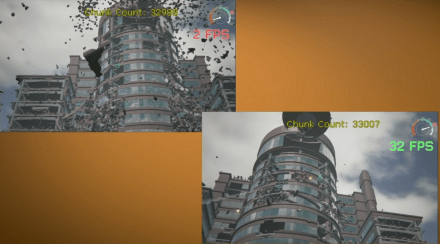

Gaming – PC gaming, cloud assistance

- Destroying building, 3D real-time modeling

- Frame rate drops

- Overwhelms local machine (even high-end gaming gear)

- But then run the same app and use cloud and multiple devices to do cloud computation

- Keep frame rate high

- Computation on cloud, rendering on client (PC)

Cloud-assist on wargaming game on PC:

Demo – WebGL in IE, but on phone:

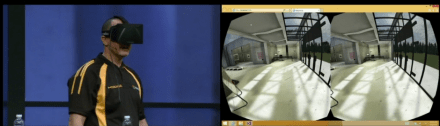

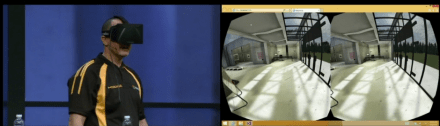

Babylon library (Oculus):

- Oculus Rift, on PC, WebGL

- Running at 200Hz

Cross-platform, starting in Windows family, then spreading out

- Fire breathing with a 24 GoPro array

- Video

- Not really clear what’s going on here.. Skiing, guy with tiger, etc.

- What’s the connection to Xbox One and Windows 8?

- Ok, In App purchases ?

Doodle God 2 on Xbox:

Partnership with Oracle, Java in Azure

- Demo in Azure portal

- Click Java version in web site settings

- Java “incredibly turnkey”

Accela demo

- They want to create app powered by Accela data

Split out pieces of URL

- data – from azure-mobile.net

- news – elsewhere

- etc

- Common identity across many services

- Code is out on CodePlex

- Application Request Routing (ARR)
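
The "split out pieces of URL" idea can be sketched as a small routing table that maps the first path segment to a backend. This is not ARR configuration (ARR is set up through IIS rewrite rules); it is a Python illustration, and every host name below is a placeholder.

```python
from urllib.parse import urlsplit

# Hypothetical routing table: first path segment -> backend base URL.
ROUTES = {
    "data": "https://example-data.azure-mobile.net",
    "news": "https://news.example.com",
}
DEFAULT_BACKEND = "https://www.example.com"


def route(url: str) -> str:
    """Rewrite an incoming URL to the backend that owns its first path segment."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    if segments and segments[0] in ROUTES:
        backend = ROUTES[segments[0]]
        rest = "/".join(segments[1:])  # drop the segment used for routing
    else:
        backend = DEFAULT_BACKEND
        rest = "/".join(segments)
    target = f"{backend}/{rest}" if rest else backend
    if parts.query:
        target += f"?{parts.query}"
    return target


if __name__ == "__main__":
    print(route("https://city.example.org/data/permits?id=7"))
    print(route("https://city.example.org/about"))
```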

Make something in Store available as both web site and app

New tool – App Studio – copy web site, expose as App

App Studio produces Web App template

- Driven by JSON config file

Challenges in wrapping a web site

- What do you do when there is no network?

- App just gets big 404 error

- But you want app responsive both online and offline

New feature – offline section in Web App template

- useSuperCache = true

- Store data locally

- Things loaded into local cache

- THEN unplug from network

- Then fully offline, but you can still move around in app, locally cached
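
The offline behavior described above amounts to a cache with network fallback: keep a local copy of whatever loaded successfully and serve it when the network is gone. A minimal sketch follows, assuming an in-memory cache and a caller-supplied fetch function. The class and its names are hypothetical and have nothing to do with how the Web App template implements useSuperCache.

```python
from typing import Callable, Dict


class CachedFetcher:
    """Serve from the network when possible and fall back to a local cache."""

    def __init__(self, fetch: Callable[[str], str]) -> None:
        self._fetch = fetch  # function that performs the real network request
        self._cache: Dict[str, str] = {}

    def get(self, url: str) -> str:
        try:
            body = self._fetch(url)
        except OSError:
            # Offline (ConnectionError is an OSError): use the cached copy if any.
            if url in self._cache:
                return self._cache[url]
            raise
        self._cache[url] = body  # remember the latest good response
        return body
```

Pages that were visited while online stay available after unplugging. A page that was never loaded still fails, which is the "big 404" case the template is trying to avoid.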

Do the same thing, app on Windows Phone

- Dev has done some responsive layout stuff

Then Android device

Zoopla app on Windows Phone

- Can easily take web content, bring to mobile app

- Include offline

Xamarin – Bring Windows app onto iPad

- Windows Universal project

- Runs on iPad, looks like Win 8 app with hub, etc.

- Also running on Android tablet

- How is this working? HTML5?

Stuff available on iOS and Android:

All done!